AI Agent Frameworks Compared: LangChain vs CrewAI vs AutoGen

Compares LangChain, CrewAI, and AutoGen across architecture model, use case fit, developer experience, production-readiness, and cost — with specific guidance on which framework to choose for different agent task types and a frank assessment of where each breaks down.

An AI agent framework is a library that orchestrates LLM calls, tool use, memory, and multi-step reasoning into a structured execution loop, so that a model can take a sequence of actions toward a goal rather than respond to a single prompt. LangChain, CrewAI, and AutoGen are three of the most widely used frameworks in 2026, each with a distinct architecture model and a different fit for different task types. Choosing the wrong one adds unnecessary complexity; choosing the right one shortens development time.

How AI Agents Work

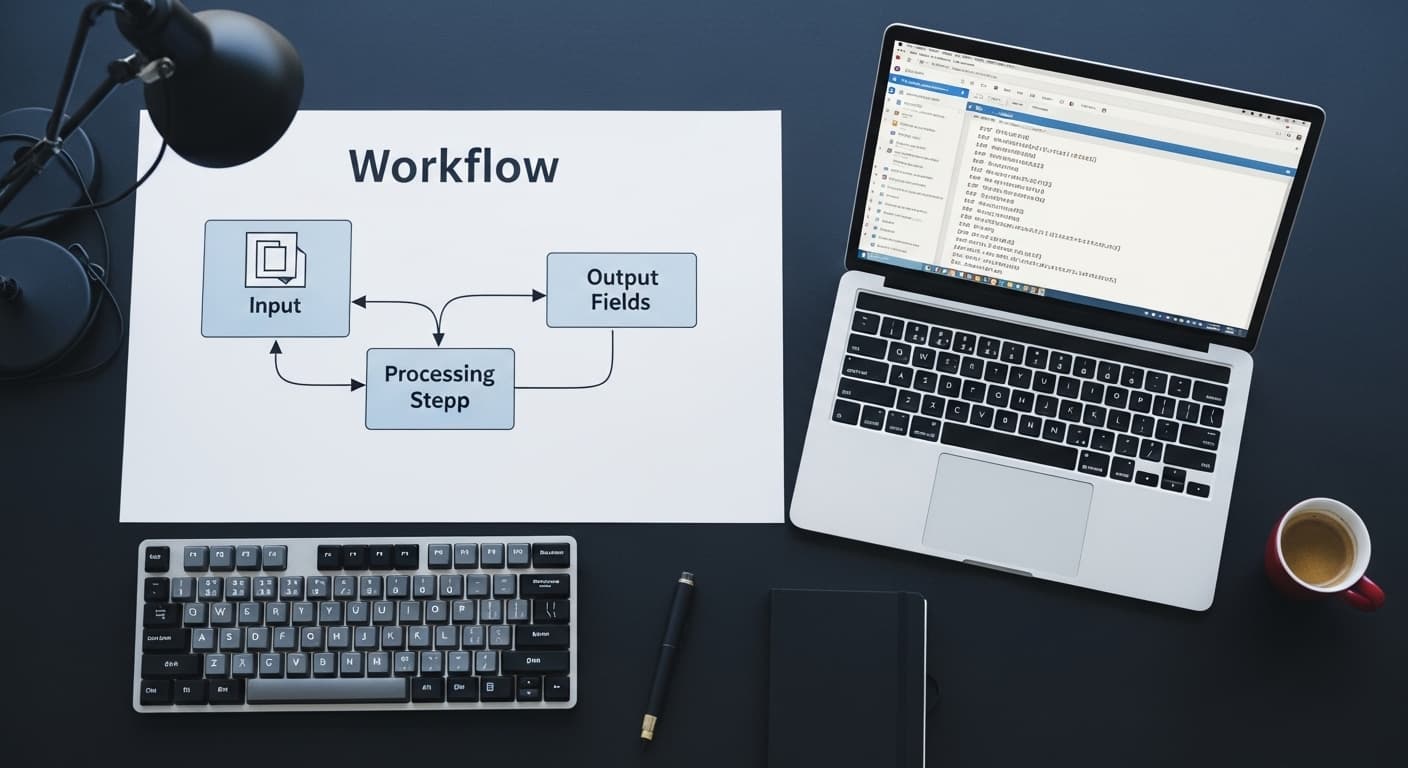

An AI agent is an LLM equipped with tools (functions it can call) and a reasoning loop (plan → act → observe → repeat) that allows it to take multi-step actions toward a goal. A single LLM call answers a question. An agent with web search, code execution, and file writing tools can research a topic, write and execute code, and produce a formatted report — autonomously across 10–20 steps.

The framework provides the scaffolding: tool registration, execution loop management, memory, and output parsing.
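The plan → act → observe loop and tool registration described above can be sketched in a few lines of plain Python. This is a minimal illustration, not any framework's API: `scripted_model` is a hypothetical stand-in for a real LLM call, and `TOOLS`, `tool`, and `run_agent` are names invented for this example.

```python
from typing import Callable

# Tool registry: maps a tool name to a plain Python function.
TOOLS: dict[str, Callable[[str], str]] = {}

def tool(fn):
    """Register a function as a tool the agent may call."""
    TOOLS[fn.__name__] = fn
    return fn

@tool
def word_count(text: str) -> str:
    return str(len(text.split()))

def scripted_model(history: list[str]) -> dict:
    """Hypothetical stand-in for an LLM call. Requests a tool on the
    first step, then returns a final answer once it has an observation."""
    observations = [h for h in history if h.startswith("observation:")]
    if not observations:
        return {"action": "word_count", "input": "plan act observe repeat"}
    count = observations[-1].split(": ")[1]
    return {"final": f"The text has {count} words."}

def run_agent(goal: str, model=scripted_model, max_steps: int = 10) -> str:
    history = [f"goal: {goal}"]
    for _ in range(max_steps):
        decision = model(history)                               # plan
        if "final" in decision:
            return decision["final"]
        result = TOOLS[decision["action"]](decision["input"])   # act
        history.append(f"observation: {result}")                # observe
    raise RuntimeError("step limit reached without a final answer")
```

Calling `run_agent("count the words")` takes one tool step and then returns the final answer. The `max_steps` cap is the part most worth keeping in any real loop, since it bounds cost when the model never converges.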

LangChain

Architecture: Chain-based and agent-based. LangChain Expression Language (LCEL) for composing deterministic chains. LangGraph (a LangChain subproject) for stateful, graph-based agent workflows with explicit state management.

Strengths: Largest ecosystem (hundreds of integrations), most mature RAG pipeline tooling, LangGraph gives fine-grained control over agent state and execution flow, strong community and documentation.

Weaknesses: High abstraction overhead makes debugging difficult, rapid API changes have historically broken production pipelines between versions, overkill for simple single-step LLM integrations.

Best for: RAG pipelines, complex multi-step agents requiring explicit state management (use LangGraph), applications integrating with many different tools and data sources.
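To make "graph-based agent workflows with explicit state management" concrete, here is a minimal sketch of the idea in plain Python. It deliberately does not use the LangGraph API; `NODES`, `EDGES`, `run_graph`, and the node functions are hypothetical names for this illustration. Each node reads and returns a state dictionary, and each edge is a function that picks the next node from that state.

```python
from typing import Optional

State = dict  # explicit state passed from node to node

def retrieve(state: State) -> State:
    # Placeholder retrieval step; a real node would query a document store.
    return {**state, "docs": ["doc about " + state["question"]]}

def draft(state: State) -> State:
    attempt = state.get("attempts", 0) + 1
    answer = f"draft {attempt} using {len(state['docs'])} doc(s)"
    return {**state, "attempts": attempt, "answer": answer}

def route_after_draft(state: State) -> Optional[str]:
    # Conditional edge: loop back to "draft" until two attempts exist.
    return "draft" if state["attempts"] < 2 else None

NODES = {"retrieve": retrieve, "draft": draft}
EDGES = {"retrieve": lambda state: "draft", "draft": route_after_draft}

def run_graph(entry: str, state: State, max_steps: int = 20) -> State:
    current: Optional[str] = entry
    for _ in range(max_steps):
        state = NODES[current](state)     # run the node
        current = EDGES[current](state)   # choose the next node from state
        if current is None:               # None marks the end of the graph
            return state
    raise RuntimeError("graph did not terminate")

final = run_graph("retrieve", {"question": "agents"})
```

Because every transition is an ordinary function of the state, the control flow can be inspected and tested step by step, which is the kind of control the graph model is meant to provide.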

CrewAI

Architecture: Role-based multi-agent orchestration. You define a crew of agents, each with a role (Researcher, Writer, Critic), a goal, and a backstory. Agents collaborate on a task, passing outputs between each other in a defined sequence or hierarchy.

Strengths: Intuitive mental model for multi-agent workflows, fast to prototype (a multi-agent pipeline can be built in hours), good for tasks that naturally decompose into specialist roles.

Weaknesses: Less control over execution flow than LangGraph, production-readiness is lower (error handling and retry logic require more custom work), role-based abstraction becomes a constraint for tasks that don't fit the team-collaboration metaphor.

Best for: Content research and generation pipelines, workflows that decompose into distinct specialist steps, rapid prototyping of multi-agent systems.
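The role-based model can be illustrated without the library itself. The sketch below is plain Python, not the CrewAI API: `RoleAgent`, `run_crew`, and the lambda "workers" are hypothetical stand-ins for LLM-backed agents, shown only to make the sequential hand-off between roles visible.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class RoleAgent:
    role: str
    goal: str
    work: Callable[[str], str]  # stand-in for an LLM call made in this role

def run_crew(agents: List[RoleAgent], task: str) -> List[str]:
    """Run agents in order; each one receives the previous agent's output."""
    outputs = []
    current = task
    for agent in agents:
        current = agent.work(current)
        outputs.append(f"{agent.role}: {current}")
    return outputs

crew = [
    RoleAgent("Researcher", "collect facts", lambda t: f"notes on {t}"),
    RoleAgent("Writer", "draft the article", lambda t: f"draft from {t}"),
    RoleAgent("Critic", "review the draft", lambda t: f"review of {t}"),
]

results = run_crew(crew, "agent frameworks")
```

The fixed sequence is what makes this model quick to prototype, and also what becomes limiting when a task needs branching or loops rather than a straight hand-off.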

AutoGen (Microsoft)

Architecture: Conversational multi-agent framework. Agents communicate through message-passing, simulating a conversation to solve a task. A human-proxy agent can be included for human-in-the-loop workflows.

Strengths: Strongest model for human-in-the-loop agent systems, code execution is a first-class feature (agents write and execute code iteratively, review output, fix errors), strong Microsoft ecosystem integration.

Weaknesses: Conversational model can be inefficient for tasks that don't benefit from agent-to-agent dialogue, higher latency per task, a smaller community and less documentation than LangChain.

Best for: Code generation and execution tasks, data analysis agents, workflows requiring human review and approval at defined checkpoints, Microsoft-stack environments.
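The message-passing pattern with a human checkpoint can be sketched as follows. This is plain Python rather than the AutoGen API; `coder`, `converse`, and `scripted_human` are hypothetical names, and the human reply is scripted so the example runs without input.

```python
from typing import Callable, List, Tuple

Message = Tuple[str, str]  # (sender, content)

def coder(history: List[Message]) -> str:
    # Stand-in for an LLM-backed agent: revises its proposal after feedback.
    feedback = [c for s, c in history if s == "human" and c != "APPROVE"]
    return "print('v2')" if feedback else "print('v1')"

def converse(agent: Callable[[List[Message]], str],
             human: Callable[[str], str],
             max_turns: int = 5) -> List[Message]:
    """Alternate agent proposals and human replies until approval."""
    history: List[Message] = []
    for _ in range(max_turns):
        proposal = agent(history)
        history.append(("coder", proposal))
        reply = human(proposal)          # human-in-the-loop checkpoint
        history.append(("human", reply))
        if reply == "APPROVE":
            return history
    raise RuntimeError("no approval within the turn limit")

def scripted_human(proposal: str) -> str:
    # Scripted reviewer for the demo; a real one would prompt a person.
    return "APPROVE" if "v2" in proposal else "please bump the version"

transcript = converse(coder, scripted_human)
```

Each extra round of dialogue is another model call, which is where the latency cost noted under Weaknesses comes from.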

Framework Comparison

| Factor | LangChain | CrewAI | AutoGen |
|---|---|---|---|
| Architecture | Chains + graph agents | Role-based multi-agent | Conversational multi-agent |
| Best task type | RAG, complex stateful agents | Specialist role pipelines | Code execution, human-in-loop |
| Ecosystem size | Very large | Medium | Medium |
| Production readiness | High (LangGraph) | Medium | Medium |
| Debugging ease | Low (high abstraction) | Medium | Medium |
| Human-in-loop support | Possible (LangGraph) | Limited | Strong |

When to Use Each

Use LangChain when: You are building a RAG pipeline, you need fine-grained control over agent state and execution flow (use LangGraph specifically), or you need to integrate with a wide variety of tools and data sources. Magehire's AI automation consulting uses LangChain + LangGraph for production agents requiring explicit state management.

Use CrewAI when: Your task naturally decomposes into specialist roles, you need to prototype quickly, or the team is newer to agent development.

Use AutoGen when: The task involves iterative code writing and execution, you need genuine human-in-the-loop approval at defined points, or you're in a Microsoft-stack enterprise environment with Azure OpenAI.

Use none of the above when: The task is a single-step LLM call or a simple two-step chain. Direct API calls are more maintainable and faster to execute than any framework for simple use cases.

The Case for a Custom Agent Architecture

For production systems needing reliability at scale, all three frameworks eventually require customization around error handling, retry logic, logging, and observability. At that point, a lightweight custom agent loop — a few hundred lines of Python calling the LLM API directly with explicit tool dispatch logic — is often more maintainable than the framework abstraction.
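As a rough illustration of the "explicit tool dispatch logic" with retry and logging mentioned above, the sketch below wraps a tool call in a retry loop with exponential backoff. The function name `dispatch`, its parameters, and the `flaky_search` tool are assumptions made for this example. The default delay of zero keeps the demo fast; a real system would set a nonzero `base_delay`.

```python
import logging
import time
from typing import Callable, Dict

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent")

def dispatch(tools: Dict[str, Callable[..., str]], name: str, args: dict,
             retries: int = 3, base_delay: float = 0.0) -> str:
    """Call a tool by name, retrying failures with exponential backoff."""
    if name not in tools:
        raise KeyError(f"unknown tool: {name}")
    for attempt in range(1, retries + 1):
        try:
            result = tools[name](**args)
            log.info("tool=%s attempt=%d ok", name, attempt)
            return result
        except Exception as exc:
            log.warning("tool=%s attempt=%d failed: %s", name, attempt, exc)
            if attempt == retries:
                raise  # out of retries: surface the original error
            time.sleep(base_delay * 2 ** (attempt - 1))

# Hypothetical tool that fails twice before succeeding.
calls = {"n": 0}

def flaky_search(query: str) -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("temporary failure")
    return "result for " + query

answer = dispatch({"search": flaky_search}, "search", {"query": "agents"})
```

Every attempt is logged with the tool name and attempt number, so the failure history of a run can be reconstructed from the logs alone.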

The frameworks are most valuable for prototyping. For long-running production systems, evaluate whether the framework abstraction is earning its complexity cost.

Ready to Build a Production AI Agent?

Framework choice is the first decision — not the last. Magehire helps teams select the right architecture, build the pilot, and transition from prototype to production with the observability and error handling that agent systems require. Schedule a strategy session to design your agent architecture.
